The internet and pornography: Prime Minister calls for action

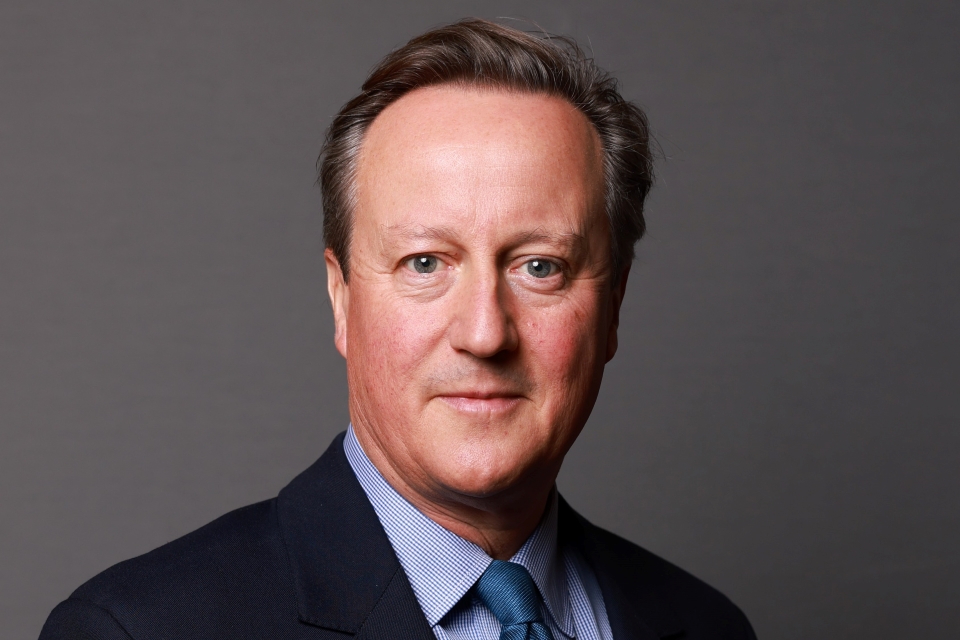

David Cameron made a speech about cracking down on online pornography and making the internet safer for children on 22 July 2013.

Thank you to the NSPCC for hosting me today and thank you for all the amazing work you do for Britain’s children.

Today I am going to tread into territory that can be hard for our society to confront. It is frankly difficult for politicians to talk about, but I believe we need to address it as a matter of urgency.

I want to talk about the internet, the impact it’s having on the innocence of our children, how online pornography is corroding childhood and how, in the darkest corners of the internet, there are things going on that are a direct danger to our children and that must be stamped out. Now, I’m not making this speech because I want to moralise or scaremonger but because I feel profoundly, as a politician and as a dad, that the time for action has come. This is, quite simply, about how we protect our children and their innocence.

Now, let me be very clear right at the start: the internet has transformed our lives for the better. It helps liberate those who are oppressed, it allows people to tell truth to power, it brings education to those previously denied it, it adds billions to our economy, it is one of the most profound and era‑changing inventions in human history.

But because of this, the internet can sometimes be given a sort of special status in debate. In fact, it can almost be seen as beyond debate, that to raise concerns about how people should access the internet or what should be on it, is somehow naïve or backwards looking. People sometimes feel they’re being told almost the following: that an unruled internet is just a fact of modern life; any fallout from that is just collateral damage and that you can as easily legislate what happens on the internet as you can legislate the tides.

And against this mind-set, people’s, most often parents’, very real concerns get dismissed. They’re told the internet is too big to mess with; it’s too big to change. But to me, the questions around the internet and the impact it has are too big to ignore. The internet is not just where we buy, sell and socialise; it’s where crimes happen; it’s where people can get hurt; it’s where children and young people learn about the world, each other, and themselves.

The fact is that the growth of the internet as an unregulated space has thrown up 2 major challenges when it comes to protecting our children. The first challenge is criminal and that is the proliferation and accessibility of child abuse images on the internet. The second challenge is cultural; the fact that many children are viewing online pornography and other damaging material at a very early age and that the nature of that pornography is so extreme it is distorting their view of sex and relationships.

Now, let me be clear, the 2 challenges are very distinct and very different. In one we’re talking about illegal material, the other is legal material that is being viewed by those who are underage. But both the challenges have something in common; they’re about how our collective lack of action on the internet has led to harmful and, in some cases, truly dreadful consequences for children.

Now, of course, a free and open internet is vital. But in no other market and with no other industry do we have such an extraordinarily light touch when it comes to protecting our children. Children can’t go into the shops or the cinema and buy things meant for adults or have adult experiences; we rightly regulate to protect them. But when it comes to the internet, in the balance between freedom and responsibility we’ve neglected our responsibility to children.

My argument is that the internet is not a side-line to real life or an escape from real life, it is real life. It has an impact on the children who view things that harm them, on the vile images of abuse that pollute minds and cause crime, on the very values that underpin our society. So we’ve got to be more active, more aware, more responsible about what happens online. And when I say we I mean we collectively: governments, parents, internet providers and platforms, educators and charities. We’ve got to work together across both the challenges that I’ve set out.

So let me start with the criminal challenge, and that is the proliferation of child abuse images online. Obviously we need to tackle this at every step of the way, whether it’s where the material is hosted, transmitted, viewed or downloaded. And I am absolutely clear that the state has a vital role to play here.

The police and CEOP, that is the Child Exploitation and Online Protection Centre, are already doing a good job in clamping down on the uploading and hosting of this material in the UK. Indeed, they have together cut the total amount of known child abuse content hosted in the UK from 18% of the global total in 1996 to less than 1% today. They’re also doing well on disrupting the so-called hidden internet, where people can share illegal files and on peer‑to‑peer sharing of images through photo-sharing sites or networks away from the mainstream internet.

Once CEOP becomes a part of the National Crime Agency, that will further increase their ability to investigate behind the pay walls, to shine a light on this hidden internet and to drive prosecutions and convictions of those who are found to use it. So we should be clear to any offender who might think otherwise, there is no such thing as a safe place on the internet to access child abuse material.

But government needs to do more. We need to give CEOP and the police all the powers they need to keep pace with the changing nature of the internet. And today I can announce that from next year we’ll also link up existing fragmented databases across all police forces to produce a single, secure database of illegal images of children which will help police in different parts of the country work together more effectively to close the net on paedophiles. It will also enable the industry to use digital hash tags from the database to proactively scan for, block and take down those images wherever they occur. Otherwise you have different police forces with different databases; you need one set of all the hash tags, all the URLs, in one place for everybody to use.

Now, industry has agreed to do exactly that because this isn’t just a job for government. The internet service providers and the search engine companies have a vital role to play and we’ve already reached a number of important agreements with them. A new UK-US taskforce is being formed to lead a global alliance with the big players in the industry to stamp out these vile images. I’ve asked Joanna Shields, CEO of Tech City and our business ambassador for digital industries, who is here today, to head up engagement with industry for this taskforce. And she’s going to work both with the UK and US governments and law enforcement agencies to maximise our international efforts.

Here in Britain, Google, Microsoft and Yahoo are already actively engaged on a major campaign to deter people who are searching for child abuse images. Now, I can’t go into the detail about this campaign, because that might undermine its effectiveness, but I can tell you it is robust, it is hard-hitting; it is a serious deterrent to people who are looking for these images.

Now, where images are reported they are immediately added to a list and they’re blocked by search engines and ISPs so people can’t access those sites. These search engines also act to block illegal images and the URLs, or pathways, that lead to these images from search results, once they’ve been alerted to their existence.

But here to me is the problem. The job of actually identifying these images falls to a small body called the Internet Watch Foundation. Now this is a world leading organisation, but it relies almost entirely on members of the public reporting things they’ve seen online.

So the search engines themselves have a purely reactive position. When they’re prompted to take something down they act, otherwise they don’t. And if an illegal image hasn’t been reported it can still be returned in searches. In other words, the search engines are not doing enough to take responsibility. Indeed, in this specific area, they are effectively denying responsibility.

And this situation has continued because of a technical argument. It goes like this: the search engine shouldn’t be involved in finding out where these images are because the search engines are just the pipe that delivers the images, and that holding them responsible would be a bit like holding the Post Office responsible for sending illegal objects in anonymous packages. But that analogy isn’t really right, because the search engine doesn’t just deliver the material that people see, it helps to identify it.

Companies like Google make their living out of trawling and categorising content on the web, so that in a few key strokes you can find what you’re looking for out of unimaginable amounts of information. That’s what they do. They then sell advertising space to companies based on your search patterns. So if I go back to the Post Office analogy, it would be like the Post Office helping someone to identify and then order the illegal material in the first place and then sending it on to them, in which case the Post Office would be held responsible for their actions.

So quite simply we need the search engines to step up to the plate on this issue. We need a situation where you cannot have people searching for child abuse images and being aided in doing so. If people do try and search for these things, they are not only blocked, but there are clear and simple signs warning them that what they are trying to do is illegal, and where there is much more accountability on the part of the search engines to help find these sites and block them.

On all of these things, let me tell you what we’ve already done and what we’re going to do. What we’ve already done is insist that clear, simple warning pages are designed and placed wherever child abuse sites have been identified and taken down so that if someone arrives at one of these sites they are clearly warned that the page contained illegal images. These so-called splash pages are up on the internet from today and this is, I think, a vital step forward. But we need to go further.

These warning pages should also tell people who’ve landed on these sites that they face consequences like losing their job, losing their family or even access to their children if they continue. And vitally they should direct them to the charity Stop it Now! which can help people change their behaviour anonymously and in complete confidence.

On people searching for these images, there are some searches where people should be given clear routes out of that search to legitimate sites on the web. Let me give you an example. If someone is typing in ‘child’ and ‘sex’ there should come up a list of options: do you mean child sex education? Do you mean child gender? What should not be returned is a list of pathways into illegal images which have yet to be identified by CEOP or reported to the Internet Watch Foundation.

Then there’s this next issue. There are some searches which are so abhorrent and where there could be no doubt whatsoever about the sick and malevolent intent of the searcher – terms that I can’t say today in front of you with the television cameras here, but you can imagine – where it’s absolutely obvious the person at the keyboard is looking for revolting child abuse images. In these cases, there should be no search results returned at all. Put simply, there needs to be a list of terms – a blacklist – which offer up no direct search returns.

So I have a very clear message for Google, Bing, Yahoo! and the rest: you have a duty to act on this, and it is a moral duty. I simply don’t accept the argument that some of these companies have used to say that these searches should be allowed because of freedom of speech.

On Friday, I sat with the parents of Tia Sharp and April Jones. They want to feel that everyone involved is doing everything they can to play their full part in helping rid the internet of child abuse images. So I’ve called for a progress report in Downing Street in October with the search engines coming in to update me.

And the question we’ve asked is clear. If CEOP give you a blacklist of internet search terms, will you commit to stop offering up any returns on these searches? If the answer is yes, good. If the answer is no and the progress is slow or non-existent, I can tell you we’re already looking at legislative options so that we can force action in this area.

There’s one further message I have for the search engines. If there are technical obstacles to acting on this, don’t just stand by and say nothing can be done, use your great brains to overcome them. You’re the people who’ve worked out how to map almost every inch of the earth from space. You’ve designed algorithms to make sense of vast quantities of information. You’re the people who take pride in doing what they say can’t be done.

You hold hackathons for people to solve impossible internet conundrums. Well hold a hackathon for child safety. Set your greatest brains to work on this. You’re not separate from our society, you’re part of our society and you must play a responsible role within it. This is quite simply about obliterating this disgusting material from the net, and we should do whatever it takes.

So that’s how we’re going to deal with the criminal challenge. The cultural challenge is the fact that many children are watching online pornography and finding other damaging material online at an increasingly young age. Now young people have always been curious about pornography; they’ve always sought it out.

But it used to be that society could protect children by enforcing age restrictions on the ground; whether that was setting a minimum age for buying top-shelf magazines, putting watersheds on the TV or age rating films and DVDs. But the explosion of pornography on the internet, and the explosion of the internet into our children’s lives, has changed all of that profoundly. It’s made it much harder to enforce age restrictions. It’s made it much more difficult for parents to know what’s going on. And as a society we need to be clear and honest about what is going on.

For a lot of children, watching hard-core pornography is in danger of becoming a rite of passage. In schools up and down our country, from the suburbs to the inner city, there are young people who think it’s normal to send pornographic material as a prelude to dating in the same way you might once have sent a note across the classroom.

Over a third of children have received a sexually explicit text or email. In a recent survey, a quarter of children said they had seen pornography which had upset them. This is happening, and it is happening on our watch as adults. And the effect that it can have can be devastating. Effectively our children are growing up too fast. They’re getting distorted ideas about sex and being pressurised in a way that we’ve never seen before, and as a father I am extremely concerned about this.

Now there’s some who might say, ‘Well, it’s fine for you to have a view as a parent but not as Prime Minister. This is an issue for parents not the state.’ But the way I see it, there is a contract between parents and the state. Parents say, ‘Look, we’ll do our best to raise our children right and the state should agree to stand on our side, to make that job a bit easier not a bit harder.’

But when it comes to internet pornography, parents have been left too much on their own. And I’m determined to put that right. We all need to work together, both to prevent children from accessing pornography and educate them about keeping safe online. This is about access and it’s about education. And I want to say briefly what we’re doing about each.

On access, things have changed profoundly in recent years. Not long ago access to the internet was mainly restricted to the PC in the corner of the living room with a beeping dial-up modem – we all remember the worldwide wait – it was downstairs in the house where parents could keep an eye on things. But now the internet is on the smartphones, the laptops, the tablets, the computers, the games consoles. And with high speed connections that make movie downloads and real time streaming possible, parents need much, much more help to protect their children across all of these fronts.

So on mobile phones, it’s great to report that all of the operators have now agreed to put adult content filters onto phones automatically. And to deactivate them you have to prove you’re over 18 and operators will continue to refine and improve those filters.

On public wi-fi, of which more than 90% is provided by 6 companies – O2, Virgin Media, Sky, Nomad, BT and Arqiva – I’m pleased to say we’ve now reached an agreement with all of them that family friendly filters are to be applied across public wi-fi networks wherever children are likely to be present. This will be done by the end of next month. And we’re keen to introduce a family friendly wi-fi symbol which retailers, hotels, transport companies can use to show their customers that their public wi-fi is properly filtered. So I think good progress there; that’s how we’re protecting children outside the home.

Inside the home, on the private family network, it is a more complicated issue. There’s been a big debate about whether internet filters should be set to a default ‘on’ position, in other words with adult content filters applied by default, or not. Let’s be clear, this has never been a debate about companies or government censoring the internet, but about filters to protect children at the home network level.

Those who wanted default ‘on’ said, ‘It’s a no-brainer: just have the filter set to ‘on’, then adults can turn them off if they want to and that way we can protect all children whether their parents are engaged in internet safety or not.’ But others said default ‘on’ filters could create a dangerous sense of complacency. They said that with default filters parents wouldn’t bother to keep an eye on what their kids are watching, as they’d be complacent; they’d just assume the whole thing had been taken care of.

Now, I say we need both: we need good filters that are preselected to be on, pre-ticked unless an adult turns them off, and we need parents aware and engaged in the setting of those filters. So, that is what we’ve worked hard to achieve, and I appointed Claire Perry to take charge of this, for the very simple reason that she’s passionate about this issue, determined to get things done and extremely knowledgeable about it at the same time too. Now, she’s worked with the big 4 internet service providers – TalkTalk, Virgin, Sky and BT – who together supply internet connections to almost 9 out of 10 homes.

And today, after months of negotiation, we’ve agreed home network filters that are the best of both worlds. By the end of this year, when someone sets up a new broadband account, the settings to install family friendly filters will be automatically selected; if you just click next or enter, then the filters are automatically on.

And, in a really big step forward, all the ISPs have rewired their technology so that once your filters are installed they will cover any device connected to your home internet account; no more hassle of downloading filters for every device, just one click protection. One click to protect your whole home and to keep your children safe.

Now, once those filters are installed it should not be the case that technically literate children can just flick the filters off at the click of the mouse without anyone knowing, and this, if you’ve got children, is absolutely vital. So, we’ve agreed with industry that those filters can only be changed by the account holder, who has to be an adult. So an adult has to be engaged in the decisions.

But of course, all this only deals with the flow of new customers, new broadband accounts, those switching service providers or buying an internet connection for the first time. It doesn’t deal with the huge stock of the existing customers, almost 19 million households, so that is where we now need to set our sights.

Following the work we’ve already done with the service providers, they have now agreed to take a big step: by the end of next year, they will have contacted all their existing customers and presented them with an unavoidable decision about whether or not to install family friendly content filters. TalkTalk, who’ve shown great leadership on this, have already started and are asking existing customers as I speak.

We’re not prescribing how the ISPs should contact their customers; it’s up to them to find their own technological solutions. But however they do it, there’ll be no escaping this decision, no, ‘Remind me later,’ and then it never gets done. And they will ensure that it’s an adult making the choice.

Now, if adults don’t want these filters that is their decision, but for the many parents who would like to be prompted or reminded, they’ll get that reminder and they’ll be shown very clearly how to put on family friendly filters. I think this is a big improvement on what we had before and I want to thank the service providers for getting on board with this, but let me be clear: I want this to be a priority for all internet service providers not just now, but always.

That is why I am asking today for the small companies in the market to adopt this approach too, and I am also asking Ofcom, the industry regulator, to oversee this work, to judge how well the ISPs are doing and to report back regularly. If they find that we’re not protecting children effectively, I will not hesitate to take further action.

But let me also say this: I know there are lots of charities and other organisations which provide vital online advice and support that many young people depend on, and we need to make sure that the filters do not, even unintentionally, restrict this helpful and often educational content. So I’ll be asking the UK Council for Child Internet Safety to set up a working group to ensure this doesn’t happen, as well as talking to parents about how effective they think that these filter products we’re talking about really are.

So, making filters work is one front we’re acting on; the other is education. In the new national curriculum, launched just a couple of weeks ago, there are unprecedented requirements to teach children about online safety. That doesn’t mean teaching young children about pornography; it means sensible, age-appropriate education about what to expect on the internet. We need to teach our children not just about how to stay safe online, but how to behave online too, on social media and over phones with their friends.

And it’s not just children that need to be educated; it’s us parents, too. People of my generation grew up in a completely different world; our parents kept an eye on us in the world they could see. This is still relatively new, a digital landscape, a world of online profiles and passwords, and speaking as a parent, most of us do need help in navigating it.

Companies like Vodafone already do a good job at giving parents advice about online safety; they spend millions on it, and today they’re launching the latest edition of their Digital Parenting guide. They’re also going to publish a million copies of a new educational tool for younger children called, ‘The digital facts of life.’

And I’m pleased to announce something else today: a major new national campaign that is going to be launched in the new year, that is going to be backed by the 4 major internet service providers as well as other child focused companies, that will speak directly to parents about how to keep their children safe online and how to talk to their children about other dangers like sexting or online bullying.

And government is going to play its part, too, because we get millions of people interacting with government. Whether that’s sorting out their road tax or their Twitter account, or soon registering for Universal Credit, I’ve asked that we use these interactions to keep up the campaign, to prompt parents to think about filters and to think about how they can keep their children safe online. This is about all of us playing our part.

So, we’re taking action on how children access this stuff, how they’re educated about it, and I can tell you today we’re also taking action on the content that is online. There are certain types of pornography that can only be described as extreme; I am talking particularly about pornography that is violent and that depicts simulated rape. These images normalise sexual violence against women and they’re quite simply poisonous to the young people who see them.

The legal situation is, although it’s been a crime to publish pornographic portrayals of rape for decades, existing legislation does not cover possession of this material, at least in England and Wales. Possession of such material is already an offence in Scotland, but because of a loophole in the Criminal Justice and Immigration Act 2008 it is not an offence south of the border. But I can tell you today, we are changing that: we are closing the loophole, making it a criminal offence to possess internet pornography that depicts rape.

And we’re going to do something else to make sure that the same rules apply online as they do offline. There are examples of extreme pornography that are so bad you can’t even buy this material in a licensed sex shop, and today I can announce we’ll be legislating so that videos streamed online in the UK are subject to the same rules as those sold in shops. Put simply: what you can’t get in a shop, you shouldn’t be able to get online.

Now, everything today I’ve spoken about comes back to one thing: the kind of society we want to be. I want Britain to be the best place to raise a family; a place where your children are safe, where there’s a sense of right and wrong and proper boundaries between them, where children are allowed to be children.

And all the actions we’re taking today come back to that basic idea: protecting the most vulnerable in our society, protecting innocence, protecting childhood itself. That is what is at stake, and I will do whatever it takes to keep our children safe.

Updates to this page

- Replaced check against delivery version with transcript.
- First published.